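This write-up presents a solution to a facial keypoint detection competition. The model is trained on 5,000 annotated face images to locate 4 keypoints. It uses ResNet18, with the input layer adapted to single-channel images and the output layer adapted to 8 coordinate values. Several models are trained with data augmentation and K-fold cross-validation, and their predictions are ensembled to improve accuracy. Performance is measured by MAE.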


Competition overview

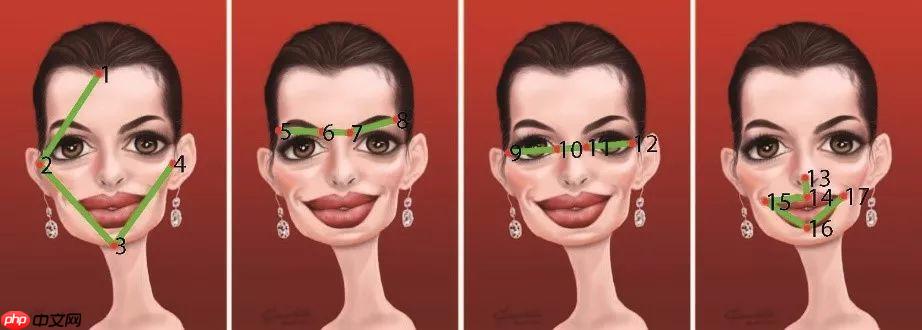

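Face recognition is a biometric technology that identifies people from their facial features; finance and security are currently its two most widespread application areas. Facial keypoint detection is a key component of face recognition: it locates the coordinates of specified positions on a face, such as the eyebrows, eyes, nose, mouth, and facial contour.

Task

Given a face image, locate 4 facial keypoints. The task can be treated as a keypoint detection problem.

Training set: 5,000 face images with facial keypoint annotations.

Test set: about 2,000 face images, for which participants must predict the keypoint positions.

Data description

The competition data consists of a training set and a test set. train.csv holds the training annotations; train.npy and test.npy hold the training and test images and can be read with numpy.load.

train.csv contains the coordinates of the left eye, right eye, nose, and mouth: 4 points, 8 values in total.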

left_eye_center_x,left_eye_center_y,right_eye_center_x,right_eye_center_y,nose_tip_x,nose_tip_y,mouth_center_bottom_lip_x,mouth_center_bottom_lip_y
66.3423640449,38.5236134831,28.9308404494,35.5777725843,49.256844943800004,68.2759550562,47.783946067399995,85.3615820225
68.9126037736,31.409116981100002,29.652226415100003,33.0280754717,51.913358490600004,48.408452830200005,50.6988679245,79.5740377358
68.7089943925,40.371149158899996,27.1308201869,40.9406803738,44.5025226168,69.9884859813,45.9264269159,86.2210093458
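To make the label layout concrete, here is a minimal sketch that parses two rows of the excerpt above into named (x, y) pairs using only the standard library. The helper name `row_to_keypoints` is hypothetical, and the sketch assumes every field is present; the real file appears to contain missing values, since the notebook later fills them in.

```python
import csv
import io

# Two rows copied from the train.csv excerpt; the real file has one row per image.
SAMPLE_CSV = """left_eye_center_x,left_eye_center_y,right_eye_center_x,right_eye_center_y,nose_tip_x,nose_tip_y,mouth_center_bottom_lip_x,mouth_center_bottom_lip_y
66.3423640449,38.5236134831,28.9308404494,35.5777725843,49.256844943800004,68.2759550562,47.783946067399995,85.3615820225
68.9126037736,31.409116981100002,29.652226415100003,33.0280754717,51.913358490600004,48.408452830200005,50.6988679245,79.5740377358
"""

KEYPOINT_NAMES = ["left_eye_center", "right_eye_center", "nose_tip", "mouth_center_bottom_lip"]


def row_to_keypoints(row):
    """Turn one CSV row (column name -> string) into a list of (name, x, y) tuples."""
    return [(name, float(row[name + "_x"]), float(row[name + "_y"])) for name in KEYPOINT_NAMES]


rows = list(csv.DictReader(io.StringIO(SAMPLE_CSV)))
keypoints = [row_to_keypoints(row) for row in rows]

for name, x, y in keypoints[0]:
    print(f"{name}: x={x:.2f}, y={y:.2f}")
```

Note that the left eye has the larger x value in these rows: "left" refers to the subject's left, which appears on the right side of the image.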

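Evaluation rules

Submissions are scored with the regression metric MAE (mean absolute error). Lower is better, and the best possible score is 0. Reference evaluation code:

from sklearn.metrics import mean_absolute_error
y_true = [3, -0.5, 2, 7]
y_pred = [2.5, 0.0, 2, 8]
mean_absolute_error(y_true, y_pred)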
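For readers who want to see what the metric computes, the following sketch reimplements MAE in plain Python on the same example values. The function name `mae` is mine; it is meant to mirror the definition (the average of the absolute differences), not scikit-learn's internals.

```python
def mae(y_true, y_pred):
    """Mean absolute error: the average of |y_true - y_pred| over all values."""
    if len(y_true) != len(y_pred):
        raise ValueError("inputs must have the same length")
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


y_true = [3, -0.5, 2, 7]
y_pred = [2.5, 0.0, 2, 8]

# Absolute errors are 0.5, 0.5, 0 and 1, so the mean is 2 / 4.
score = mae(y_true, y_pred)
print(score)  # 0.5
```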

!echo y | unzip -O CP936 /home/aistudio/data/data117050/人脸关键点检测挑战赛_数据集.zip
!mv 人脸关键点检测挑战赛_数据集/* ./
!echo y | unzip test.npy.zip
!echo y | unzip train.npy.zip

Archive: /home/aistudio/data/data117050/人脸关键点检测挑战赛_数据集.zip
inflating: 人脸关键点检测挑战赛_数据集/sample_submit.csv
inflating: 人脸关键点检测挑战赛_数据集/test.npy.zip
inflating: 人脸关键点检测挑战赛_数据集/train.csv
inflating: 人脸关键点检测挑战赛_数据集/train.npy.zip
Archive: test.npy.zip
replace test.npy? [y]es, [n]o, [A]ll, [N]one, [r]ename: inflating: test.npy
Archive: train.npy.zip
replace train.npy? [y]es, [n]o, [A]ll, [N]one, [r]ename: inflating: train.npy

!pip install qudida scikit-image albumentations --no-deps

Looking in indexes: https://pypi.tuna.tsinghua.edu.cn/simple
Requirement already satisfied: qudida in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (0.0.4)
Requirement already satisfied: scikit-image in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (0.18.3)
Requirement already satisfied: albumentations in /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages (1.1.0)

- train.csv: the eight coordinate values of the four keypoints.

- train.npy: training images

- test.npy: test images

import pandas as pd
import numpy as np
import warnings

warnings.filterwarnings("ignore")

%pylab inline

# Read the training annotations
train_df = pd.read_csv('train.csv')
# Fill missing coordinates with 48, the midpoint of the 96-pixel axis
train_df = train_df.fillna(48)
train_df.head()

Populating the interactive namespace from numpy and matplotlib

   left_eye_center_x  left_eye_center_y  right_eye_center_x  \
0          66.342364          38.523613           28.930840
1          68.912604          31.409117           29.652226
2          68.708994          40.371149           27.130820
3          65.334176          35.471878           29.366461
4          68.634857          29.999486           31.094571

   right_eye_center_y  nose_tip_x  nose_tip_y  mouth_center_bottom_lip_x  \
0           35.577773   49.256845   68.275955                  47.783946
1           33.028075   51.913358   48.408453                  50.698868
2           40.940680   44.502523   69.988486                  45.926427
3           37.767684   50.411373   64.934767                  50.028780
4           29.616429   50.247429   51.450857                  47.948571

   mouth_center_bottom_lip_y
0                  85.361582
1                  79.574038
2                  86.221009
3                  74.883241
4                  84.394286

# Load the images, which are stored in npy format
train_img = np.load('train.npy')
test_img = np.load('test.npy')

# Rearrange the axes: move the sample axis to the front, then add a channel axis
train_img = np.transpose(train_img, [2, 0, 1])
train_img = train_img.reshape(-1, 1, 96, 96)
test_img = np.transpose(test_img, [2, 0, 1])
test_img = test_img.reshape(-1, 1, 96, 96)
print(train_img.shape, test_img.shape)

(5000, 1, 96, 96) (2049, 1, 96, 96)
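The transpose matters here: the code above implies that the .npy files store samples along the last axis, as (96, 96, N). The sketch below uses a small synthetic array (a hypothetical stand-in for train.npy) to show that transpose followed by reshape keeps each image intact, while a bare reshape would mix pixels from different images.

```python
import numpy as np

# Hypothetical stand-in for train.npy: 5 images of 96x96 stacked on the LAST axis.
raw = np.arange(96 * 96 * 5, dtype=np.float32).reshape(96, 96, 5)

# Move the sample axis to the front: (96, 96, N) -> (N, 96, 96)
imgs = np.transpose(raw, [2, 0, 1])
# Add a channel axis of size 1: (N, 96, 96) -> (N, 1, 96, 96)
imgs = imgs.reshape(-1, 1, 96, 96)
print(imgs.shape)

# Image k in the new layout is exactly slice k along the original last axis.
same = all(np.array_equal(imgs[k, 0], raw[:, :, k]) for k in range(raw.shape[2]))

# A plain reshape without the transpose reads memory in the wrong order.
wrong = raw.reshape(-1, 1, 96, 96)
scrambled = not np.array_equal(wrong[0, 0], raw[:, :, 0])
print(same, scrambled)
```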

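Step 1: Load the pretrained model

import paddle
from paddle.vision.models import resnet18

# Build the model by modifying the pretrained network structure directly
def make_model():
    model = resnet18(pretrained=True)
    # Replace the first convolution so the network accepts single-channel images
    model.conv1 = paddle.nn.Conv2D(1, 64, kernel_size=[7, 7], stride=[2, 2], padding=3, data_format='NCHW')
    # Replace the classification head with an 8-value regression output
    model.fc = paddle.nn.Linear(512, 8)
    return model

model = make_model()
x = paddle.rand([1, 1, 224, 224])
out = model(x)
print(out.shape)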

W1117 14:26:05.379549 15030 device_context.cc:447] Please NOTE: device: 0, GPU Compute Capability: 7.0, Driver API Version: 11.0, Runtime API Version: 10.1

W1117 14:26:05.384275 15030 device_context.cc:465] device: 0, cuDNN Version: 7.6.

INFO:paddle.utils.download:unique_endpoints {''}

INFO:paddle.utils.download:File /home/aistudio/.cache/paddle/hapi/weights/resnet18.pdparams md5 checking...

INFO:paddle.utils.download:Found /home/aistudio/.cache/paddle/hapi/weights/resnet18.pdparams

[1, 8]
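The smoke test above feeds a 224x224 input, while the competition images are 96x96. Both work because ResNet18 ends with global average pooling before the final linear layer, so the 512-dimensional feature vector does not depend on the input size. The sketch below traces the spatial size through the downsampling stages, assuming the standard ResNet18 layout (a 7x7 stride-2 convolution, a 3x3 stride-2 max pool, then three stride-2 stages); the function names are mine.

```python
def conv_out(size, kernel, stride, padding):
    """Output length of a convolution or pooling layer along one spatial axis."""
    return (size + 2 * padding - kernel) // stride + 1


def resnet18_feature_size(size):
    """Side length of the last feature map for a square input (standard ResNet18 layout)."""
    size = conv_out(size, kernel=7, stride=2, padding=3)  # conv1
    size = conv_out(size, kernel=3, stride=2, padding=1)  # max pool
    for _ in range(3):  # layer2, layer3 and layer4 each halve the map
        size = conv_out(size, kernel=3, stride=2, padding=1)
    return size


for side in (224, 96):
    print(side, "->", resnet18_feature_size(side))
# 224 -> 7
# 96 -> 3
```

With 96x96 inputs the last feature map is only 3x3, which is small but still valid for the pooling layer.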

Step 2: Define data augmentation

%pylab inline

idx = 400
xy = train_df.iloc[idx].values.reshape(-1, 2)
# Plot only the first keypoint (the left eye) on the original image
plt.scatter(xy[:1, 0], xy[:1, 1])
plt.imshow(train_img[idx, 0, :, :], cmap='gray')

Populating the interactive namespace from numpy and matplotlib

/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/matplotlib/cbook/__init__.py:2349: DeprecationWarning: Using or importing the ABCs from 'collections' instead of from 'collections.abc' is deprecated, and in 3.8 it will stop working if isinstance(obj, collections.Iterator): /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/matplotlib/cbook/__init__.py:2366: DeprecationWarning: Using or importing the ABCs from 'collections' instead of from 'collections.abc' is deprecated, and in 3.8 it will stop working return list(data) if isinstance(data, collections.MappingView) else data

<matplotlib.image.AxesImage at 0x7f8000047ad0>

/opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/matplotlib/image.py:425: DeprecationWarning: np.asscalar(a) is deprecated since NumPy v1.16, use a.item() instead a_min = np.asscalar(a_min.astype(scaled_dtype)) /opt/conda/envs/python35-paddle120-env/lib/python3.7/site-packages/matplotlib/image.py:426: DeprecationWarning: np.asscalar(a) is deprecated since NumPy v1.16, use a.item() instead a_max = np.asscalar(a_max.astype(scaled_dtype))

<Figure size 432x288 with 1 Axes>

import albumentations as A

# Augmentation must transform the keypoints together with the image
transform = A.Compose(
    [A.HorizontalFlip(p=1)],
    keypoint_params=A.KeypointParams(format='xy')
)
transformed = transform(image=train_img[idx, 0, :, :], keypoints=xy)
transformed['keypoints'] = np.array(transformed['keypoints'])
# Plot keypoint 1 (labelled as the right eye before the flip) on the flipped image
plt.scatter(transformed['keypoints'][1, 0], transformed['keypoints'][1, 1])
plt.imshow(transformed['image'], cmap='gray')

<matplotlib.image.AxesImage at 0x7f7e9b4cdc10>


<Figure size 432x288 with 1 Axes>
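A horizontal flip changes more than the x coordinates: after mirroring, the point labelled "left eye" sits where the right eye should be, so the two eye labels have to be swapped as well. The NumPy sketch below shows both steps. It assumes the pixel-index convention x' = (width - 1) - x, which may differ slightly from the convention a given albumentations version uses, and the function names are hypothetical.

```python
import numpy as np


def hflip_image(img):
    """Mirror a (H, W) image left to right."""
    return img[:, ::-1]


def hflip_keypoints(xy, width):
    """Mirror (x, y) keypoints horizontally and swap the two eye rows.

    xy has shape (4, 2), ordered as left eye, right eye, nose, mouth.
    Assumes the convention x' = (width - 1) - x.
    """
    flipped = np.asarray(xy, dtype=np.float64).copy()
    flipped[:, 0] = (width - 1) - flipped[:, 0]
    # Row 0 must again hold the eye with the "left eye" meaning, so swap rows 0 and 1.
    return flipped[[1, 0, 2, 3]]


xy = np.array([[66.34, 38.52], [28.93, 35.58], [49.26, 68.28], [47.78, 85.36]])
flipped_xy = hflip_keypoints(xy, width=96)
print(flipped_xy)

# Flipping twice restores the original annotation.
restored_xy = hflip_keypoints(flipped_xy, width=96)
```

After the swap, row 0 still has the larger x value, matching the ordering of the unflipped labels.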

Step 3: Define the dataset

from paddle.io import DataLoader, Dataset
from PIL import Image

# Custom dataset
class MyDataset(Dataset):
    def __init__(self, img, keypoint, train=True):
        super(MyDataset, self).__init__()
        self.img = img
        self.keypoint = keypoint
        self.train = train

        self.transform = A.Compose([
            A.Resize(96, 96),
        ])

        # Keypoint-aware augmentation pipelines
        self.transform1 = A.Compose([
            A.Resize(96, 96),
            A.HorizontalFlip(p=1),
            A.RandomContrast(p=1),
            A.RandomBrightnessContrast(p=1)
        ], keypoint_params=A.KeypointParams(format='xy'))

        self.transform2 = A.Compose([
            A.Blur(p=0.5),
            A.RandomBrightnessContrast(p=0.5),
            A.RandomContrast(p=0.5),
            A.Resize(96, 96),
        ], keypoint_params=A.KeypointParams(format='xy'))

    def __getitem__(self, index):
        # Keypoint augmentation: two augmentation pipelines are used to improve accuracy
        if self.train:
            rnd = np.random.randint(10)
            if rnd > 5:
                img = self.transform1(image=self.img[index, 0, :, :].astype(np.uint8),
                                      keypoints=self.keypoint[index].reshape(-1, 2))
                xy = img['keypoints']
                img = img['image'].astype(np.float32)/255
                xy = np.array(xy).astype(np.float32)
                # The image was mirrored, so swap the left-eye and right-eye labels
                xy = xy[[1, 0, 2, 3]]
            else:
                img = self.transform2(image=self.img[index, 0, :, :].astype(np.uint8),
                                      keypoints=self.keypoint[index].reshape(-1, 2))
                xy = img['keypoints']
                img = img['image'].astype(np.float32)/255
                xy = np.array(xy).astype(np.float32)
            # Scale keypoints to [0, 1] by dividing by the image size
            return img.reshape(1, 96, 96), paddle.to_tensor(xy.flatten() / 96)
        else:
            img = Image.fromarray(self.img[index, 0, :, :])
            img = np.asarray(img).astype(np.float32)/255
            return img.reshape(1, 96, 96), self.keypoint[index] / 96.0

    def __len__(self):
        return len(self.keypoint)

# Training set: everything except the last 500 samples
train_dataset = MyDataset(
    train_img[:-500, :, :, :],
    train_df.values[:-500].astype(np.float32), True)
train_loader = DataLoader(train_dataset, batch_size=64, shuffle=True)

# Validation set: the last 500 samples
val_dataset = MyDataset(
    train_img[-500:, :, :, :],
    train_df.values[-500:].astype(np.float32), False)
val_loader = DataLoader(val_dataset, batch_size=64, shuffle=False)

# Test set: labels are placeholders filled with zeros
test_dataset = MyDataset(
    test_img[:, :, :],
    np.zeros((test_img.shape[0], 8)), False)
test_loader = DataLoader(test_dataset, batch_size=64, shuffle=False)
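Two details of the dataset class are easy to miss: pixels are scaled by 255 and keypoints by 96, so predictions must be multiplied by 96 to get back to pixel coordinates, and the flip pipeline is chosen when `rnd > 5`. The NumPy sketch below illustrates the scaling round trip and the resulting branch probability. The helper names `normalize` and `denormalize` are hypothetical and not part of the original code.

```python
import numpy as np

IMG_SIZE = 96  # images are 96x96


def normalize(img_u8, xy_pixels):
    """Scale pixels to [0, 1] and keypoints to image-relative coordinates."""
    img = img_u8.astype(np.float32) / 255
    target = xy_pixels.astype(np.float32).flatten() / IMG_SIZE
    return img.reshape(1, IMG_SIZE, IMG_SIZE), target


def denormalize(pred):
    """Map network outputs back to pixel coordinates with shape (4, 2)."""
    return (np.asarray(pred) * IMG_SIZE).reshape(-1, 2)


img_u8 = np.full((IMG_SIZE, IMG_SIZE), 255, dtype=np.uint8)
xy = np.array([[66.0, 38.0], [28.0, 35.0], [49.0, 68.0], [48.0, 85.0]])

img, target = normalize(img_u8, xy)
restored = denormalize(target)
print(img.shape, target.shape, restored.shape)

# np.random.randint(10) draws from 0..9, so `rnd > 5` holds for 6, 7, 8 and 9.
flip_probability = sum(1 for rnd in range(10) if rnd > 5) / 10
print(flip_probability)  # 0.4
```

So roughly 40% of training samples go through the flip pipeline and 60% through the blur and contrast pipeline.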

Step 4: Single-fold training

from sklearn.metrics import mean_absolute_error

# Define the optimizer and the loss function
optimizer = paddle.optimizer.Adam(parameters=model.parameters(), learning_rate=0.005)
criterion = paddle.nn.L1Loss(reduction='mean')

for epoch in range(0, 20):
    Train_Loss, Val_Loss = [], []
    Train_MAE, Val_MAE = [], []

    # Training
    model.train()
    for i, (x, y) in enumerate(train_loader):
        pred = model(x)
        loss = criterion(pred, y)
        Train_Loss.append(loss.item())
        loss.backward()
        optimizer.step()
        optimizer.clear_grad()
        # Multiply by 96 to report MAE in pixels
        Train_MAE.append(mean_absolute_error(y.numpy(), pred.numpy()) * 96)

    # Validation
    model.eval()
    for i, (x, y) in enumerate(val_loader):
        pred = model(x)
        loss = criterion(pred, y)
        Val_Loss.append(loss.item())
        Val_MAE.append(mean_absolute_error(y.numpy(), pred.numpy()) * 96)

    if epoch % 1 == 0:
        print(f'\nEpoch: {epoch}')
        print(f'Loss {np.mean(Train_Loss):3.5f}/{np.mean(Val_Loss):3.5f} MAE {np.mean(Train_MAE):3.5f}/{np.mean(Val_MAE):3.5f}')

Epoch: 0 Loss 0.37203/0.11440 MAE 35.71535/10.98286
Epoch: 1 Loss 0.09795/0.06849 MAE 9.40349/6.57502
Epoch: 2 Loss 0.07905/0.05564 MAE 7.58880/5.34112
Epoch: 3 Loss 0.07309/0.05452 MAE 7.01674/5.23387
Epoch: 4 Loss 0.06922/0.07734 MAE 6.64545/7.42501
Epoch: 5 Loss 0.05536/0.05599 MAE 5.31448/5.37539
Epoch: 6 Loss 0.06123/0.04331 MAE 5.87844/4.15806
Epoch: 7 Loss 0.05611/0.04777 MAE 5.38680/4.58609
Epoch: 8 Loss 0.05519/0.06417 MAE 5.29804/6.16067
Epoch: 9 Loss 0.05200/0.06752 MAE 4.99160/6.48194
Epoch: 10 Loss 0.05854/0.05403 MAE 5.61967/5.18692
Epoch: 11 Loss 0.05658/0.29385 MAE 5.43145/28.20921
Epoch: 12 Loss 0.05763/0.03205 MAE 5.53287/3.07668
Epoch: 13 Loss 0.05338/3.77058 MAE 5.12412425/361.97535
Epoch: 14 Loss 0.06302/0.04331 MAE 6.04996/4.15809
Epoch: 15 Loss 0.05038/0.04483 MAE 4.83634/4.30353
Epoch: 16 Loss 0.05369/0.06514 MAE 5.15467/6.25333
Epoch: 17 Loss 0.04897/0.04639 MAE 4.70134/4.45311
Epoch: 18 Loss 0.05506/0.21816 MAE 5.28606/20.94297
Epoch: 19 Loss 0.06594/0.04694 MAE 6.32994/4.50641
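In this log the two columns carry the same information at different scales: the L1 loss is computed on coordinates divided by 96, so the MAE in pixels should be about 96 times the loss. The sketch below parses three lines copied from the log with a regular expression (the pattern is my own) to check that relationship and to pick the epoch with the lowest validation MAE among them.

```python
import re

# Three lines copied from the training log above.
LOG = """\
Epoch: 11 Loss 0.05658/0.29385 MAE 5.43145/28.20921
Epoch: 12 Loss 0.05763/0.03205 MAE 5.53287/3.07668
Epoch: 19 Loss 0.06594/0.04694 MAE 6.32994/4.50641
"""

PATTERN = re.compile(r"Epoch: (\d+) Loss ([\d.]+)/([\d.]+) MAE ([\d.]+)/([\d.]+)")

records = []
for match in PATTERN.finditer(LOG):
    epoch = int(match.group(1))
    train_loss, val_loss, train_mae, val_mae = (float(v) for v in match.groups()[1:])
    records.append((epoch, train_loss, val_loss, train_mae, val_mae))

for epoch, train_loss, val_loss, train_mae, val_mae in records:
    # Loss is on the [0, 1] scale; multiplying by 96 converts it to pixels.
    print(epoch, round(train_loss * 96, 3), train_mae, round(val_loss * 96, 3), val_mae)

# Among the parsed lines, find the epoch with the lowest validation MAE.
best_epoch = min(records, key=lambda r: r[4])[0]
print("best epoch:", best_epoch)  # best epoch: 12
```

The large swings in validation MAE (for example at epochs 11 and 13 in the full log) suggest that the final epoch is not necessarily the best one to predict with.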

# Predict on the test set
def make_predict(model, loader):
    model.eval()
    predict_list = []
    for i, (x, y) in enumerate(loader):
        pred = model(x)
        predict_list.append(pred.numpy())
    return np.vstack(predict_list)

# Multiply by 96 to convert predictions back to pixel coordinates
test_pred = make_predict(model, test_loader) * 96

len(train_loader)

71

# Visualize a test prediction
idx = 42
xy = test_pred[idx, :].reshape(-1, 2)
plt.scatter(xy[:, 0], xy[:, 1], c='r')
plt.imshow(test_img[idx, 0, :, :], cmap='gray')

<matplotlib.image.AxesImage at 0x7f7e99c14cd0>


<Figure size 432x288 with 1 Axes>

Step 5: Multi-fold training

from sklearn.metrics import mean_absolute_error
from sklearn.model_selection import KFold

kf = KFold(n_splits=6)

# Train one model per fold
for idx, (train_idx, val_idx) in enumerate(kf.split(train_img, train_img)):
    # Training set for this fold
    train_dataset = MyDataset(
        train_img[train_idx, :, :, :],
        train_df.values[train_idx].astype(np.float32), True
    )
    train_loader = DataLoader(train_dataset, batch_size=64, shuffle=True)

    # Validation set for this fold
    val_dataset = MyDataset(
        train_img[val_idx, :, :, :],
        train_df.values[val_idx].astype(np.float32), False
    )
    val_loader = DataLoader(val_dataset, batch_size=64, shuffle=False)

    test_dataset = MyDataset(
        test_img[:, :, :],
        np.zeros((test_img.shape[0], 8)), False
    )
    test_loader = DataLoader(test_dataset, batch_size=64, shuffle=False)

    model = make_model()
    optimizer = paddle.optimizer.Adam(parameters=model.parameters(), learning_rate=0.005)
    criterion = paddle.nn.MSELoss(reduction='mean')

    # Training
    best_mae = 1000
    for epoch in range(0, 20):
        Train_Loss, Val_Loss = [], []
        Train_MAE, Val_MAE = [], []

        model.train()
        for i, (x, y) in enumerate(train_loader):
            pred = model(x)
            loss = criterion(pred, y)
            Train_Loss.append(loss.item())
            loss.backward()
            optimizer.step()
            optimizer.clear_grad()
            Train_MAE.append(mean_absolute_error(y.numpy(), pred.numpy()) * 96)

        model.eval()
        for i, (x, y) in enumerate(val_loader):
            pred = model(x)
            loss = criterion(pred, y)
            Val_Loss.append(loss.item())
            Val_MAE.append(mean_absolute_error(y.numpy(), pred.numpy()) * 96)

        # Save only when validation MAE improves; best_mae must be updated,
        # otherwise every epoch overwrites the checkpoint
        if best_mae > np.mean(Val_MAE):
            best_mae = np.mean(Val_MAE)
            paddle.save(model.state_dict(), f"model_{idx}.pdparams")

        print(f'\nFold {idx+1} Epoch: {epoch}')
        print(f'Loss {np.mean(Train_Loss):3.5f}/{np.mean(Val_Loss):3.5f} MAE {np.mean(Train_MAE):3.5f}/{np.mean(Val_MAE):3.5f}')
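To see which samples each fold validates on, the sketch below reproduces unshuffled K-fold splitting in NumPy. It follows my understanding of how scikit-learn's KFold assigns contiguous blocks when shuffling is off (the first n_samples % n_splits folds get one extra sample). The function name `kfold_indices` is hypothetical.

```python
import numpy as np


def kfold_indices(n_samples, n_splits):
    """Yield (train_idx, val_idx) for contiguous, unshuffled K-fold splits."""
    indices = np.arange(n_samples)
    # The first n_samples % n_splits folds receive one extra sample.
    fold_sizes = np.full(n_splits, n_samples // n_splits, dtype=int)
    fold_sizes[: n_samples % n_splits] += 1
    start = 0
    for size in fold_sizes:
        stop = start + size
        val_idx = indices[start:stop]
        train_idx = np.concatenate([indices[:start], indices[stop:]])
        yield train_idx, val_idx
        start = stop


folds = list(kfold_indices(5000, 6))
val_sizes = [len(val_idx) for _, val_idx in folds]
print(val_sizes)  # [834, 834, 833, 833, 833, 833]
```

Each sample is used for validation exactly once, and each model trains on the remaining five sixths of the data.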


Fold 1 Epoch: 0 Loss 0.94416/0.00426 MAE 32.61103/4.72619
Fold 1 Epoch: 1 Loss 0.00349/0.00295 MAE 4.24089/3.74488
Fold 1 Epoch: 2 Loss 0.00319/0.00343 MAE 4.02212/4.33141
Fold 1 Epoch: 3 Loss 0.00337/0.00321 MAE 4.12612/4.13233
Fold 1 Epoch: 4 Loss 0.00335/0.00331 MAE 4.11586/4.12983
Fold 1 Epoch: 5 Loss 0.00331/0.00242 MAE 4.12359/3.43066
Fold 1 Epoch: 6 Loss 0.00284/0.00223 MAE 3.74762/3.20836
Fold 1 Epoch: 7 Loss 0.00304/0.00358 MAE 3.94653/4.48110
Fold 1 Epoch: 8 Loss 0.00309/0.00267 MAE 3.93305/3.63162
Fold 1 Epoch: 9 Loss 0.00294/0.00307 MAE 3.81775/4.06164
Fold 1 Epoch: 10 Loss 0.00328/0.00261 MAE 4.04670/3.56259
Fold 1 Epoch: 11 Loss 0.00288/0.00282 MAE 3.73693/3.61342
Fold 1 Epoch: 12 Loss 0.00333/0.00359 MAE 4.13195/4.32717
Fold 1 Epoch: 13 Loss 0.00287/0.00659 MAE 3.75797/5.60564
Fold 1 Epoch: 14 Loss 0.00339/0.00453 MAE 4.15032/4.86328
Fold 1 Epoch: 15 Loss 0.00353/0.00345 MAE 4.10001/3.99001
Fold 1 Epoch: 16 Loss 0.00307/0.00788 MAE 3.90741/6.40011
Fold 1 Epoch: 17 Loss 0.00310/0.00469 MAE 3.91649/5.00629
Fold 1 Epoch: 18 Loss 0.00318/0.00310 MAE 3.98748/3.99643
Fold 1 Epoch: 19 Loss 0.00331/0.00519 MAE 4.05921/4.83937


Fold 2 Epoch: 0 Loss 0.89342/0.01459 MAE 33.90798/8.50453
Fold 2 Epoch: 1 Loss 0.00967/0.01136 MAE 6.48405/8.91911
Fold 2 Epoch: 2 Loss 0.00762/0.00588 MAE 6.04389/5.51903
Fold 2 Epoch: 3 Loss 0.00479/0.00574 MAE 4.56526/5.39814
Fold 2 Epoch: 4 Loss 0.00441/0.00274 MAE 4.38107/3.75544
Fold 2 Epoch: 5 Loss 0.00375/0.00717 MAE 3.94084/6.64185
Fold 2 Epoch: 6 Loss 0.00503/0.00264 MAE 4.61859/3.44157
Fold 2 Epoch: 7 Loss 0.00340/0.00275 MAE 3.60434/3.67714
Fold 2 Epoch: 8 Loss 0.00367/0.00371 MAE 3.68822/4.48952
Fold 2 Epoch: 9 Loss 0.00342/0.00277 MAE 3.76756/3.29718
Fold 2 Epoch: 10 Loss 0.00452/0.00296 MAE 3.90251/3.59065
Fold 2 Epoch: 11 Loss 0.00386/0.00302 MAE 3.70734/3.85156
Fold 2 Epoch: 12 Loss 0.00408/0.00510 MAE 3.85178/4.45351
Fold 2 Epoch: 13 Loss 0.00499/0.00455 MAE 3.99566/4.02180
Fold 2 Epoch: 14 Loss 0.00450/0.00469 MAE 3.78992/4.03149
Fold 2 Epoch: 15 Loss 0.00554/0.00272 MAE 3.99448/3.45180
Fold 2 Epoch: 16 Loss 0.00457/0.00518 MAE 3.86668/4.97044
Fold 2 Epoch: 17 Loss 0.00441/0.00487 MAE 4.12124124/5.17729
Fold 2 Epoch: 18 Loss 0.00441/0.00375 MAE 4.32277/3.95233
Fold 2 Epoch: 19 Loss 0.00430/0.00609 MAE 4.03132/5.22334


Fold 3 Epoch: 0 Loss 0.86947/0.00410 MAE 29.16706/4.85964
Fold 3 Epoch: 1 Loss 0.00321/0.00250 MAE 4.00056/3.50814
Fold 3 Epoch: 2 Loss 0.00297/0.00398 MAE 3.83083/4.34380
Fold 3 Epoch: 3 Loss 0.00311/0.00372 MAE 3.87553/4.51552
Fold 3 Epoch: 4 Loss 0.00281/0.00264 MAE 3.71402/3.64536
Fold 3 Epoch: 5 Loss 0.00287/0.00243 MAE 3.75822/3.52269
Fold 3 Epoch: 6 Loss 0.00272/0.00330 MAE 3.63198/4.33096
Fold 3 Epoch: 7 Loss 0.00293/0.00365 MAE 3.79156/4.50453
Fold 3 Epoch: 8 Loss 0.00314/0.00350 MAE 3.89145/4.27802
Fold 3 Epoch: 9 Loss 0.00312/0.00320 MAE 3.94531/4.05098
Fold 3 Epoch: 10 Loss 0.00284/0.00602 MAE 3.72679/5.85368
Fold 3 Epoch: 11 Loss 0.00328/0.00340 MAE 3.99917/4.22787
Fold 3 Epoch: 12 Loss 0.00309/0.00563 MAE 3.93031/4.72741
Fold 3 Epoch: 13 Loss 0.00316/0.00429 MAE 3.91007/4.63886
Fold 3 Epoch: 14 Loss 0.00315/0.00721 MAE 3.92436/6.64559
Fold 3 Epoch: 15 Loss 0.00361/0.00663 MAE 4.15742/6.57676
Fold 3 Epoch: 16 Loss 0.00410/0.00581 MAE 4.51585/5.81121
Fold 3 Epoch: 17 Loss 0.00341/0.01221 MAE 4.08997/7.85437
Fold 3 Epoch: 18 Loss 0.00451/0.00557 MAE 4.74087/5.32913
Fold 3 Epoch: 19 Loss 0.00360/0.00419 MAE 4.24454/4.55999


Fold 4 Epoch: 0 Loss 0.85392/0.00368 MAE 32.96611/4.24245
Fold 4 Epoch: 1 Loss 0.00256/0.00277 MAE 3.46177/3.51331
Fold 4 Epoch: 2 Loss 0.00221/0.00202 MAE 3.17338/2.95567
Fold 4 Epoch: 3 Loss 0.00214/0.00304 MAE 3.10563/3.88381
Fold 4 Epoch: 4 Loss 0.00219/0.00233 MAE 3.16203/3.24165
Fold 4 Epoch: 5 Loss 0.00242/0.00257 MAE 3.35008/3.49035
Fold 4 Epoch: 6 Loss 0.00221/0.00203 MAE 3.20184/3.08044
Fold 4 Epoch: 7 Loss 0.00224/0.00191 MAE 3.21450/2.89856
Fold 4 Epoch: 8 Loss 0.00233/0.00359 MAE 3.27101/4.44508
Fold 4 Epoch: 9 Loss 0.00273/0.00342 MAE 3.56527/4.12814
Fold 4 Epoch: 10 Loss 0.00281/0.00290 MAE 3.67255/3.80147
Fold 4 Epoch: 11 Loss 0.00312/0.00324 MAE 3.91060/4.08256
Fold 4 Epoch: 12 Loss 0.00271/0.00222 MAE 3.55698/3.34696
Fold 4 Epoch: 13 Loss 0.00263/0.00498 MAE 3.54320/5.23288
Fold 4 Epoch: 14 Loss 0.00311/0.00403 MAE 3.89002/4.00476
Fold 4 Epoch: 15 Loss 0.00251/0.00276 MAE 3.47477/3.81179
Fold 4 Epoch: 16 Loss 0.00334/0.00247 MAE 4.04915/3.55583
Fold 4 Epoch: 17 Loss 0.00271/0.00490 MAE 3.76235/5.47203
Fold 4 Epoch: 18 Loss 0.00329/0.00277 MAE 3.96554/3.80042
Fold 4 Epoch: 19 Loss 0.00273/0.00291 MAE 3.72141/4.05549


Fold 5 Epoch: 0 Loss 0.85670/0.00696 MAE 29.43666/4.68126
Fold 5 Epoch: 1 Loss 0.00806/0.02447 MAE 6.22143/12.01605
Fold 5 Epoch: 2 Loss 0.00842/0.00421 MAE 6.22863/4.50202
Fold 5 Epoch: 3 Loss 0.00348/0.00344 MAE 4.06411/4.23032
Fold 5 Epoch: 4 Loss 0.00327/0.00425 MAE 4.00236/4.06088
Fold 5 Epoch: 5 Loss 0.00315/0.00437 MAE 3.88867/4.45807
Fold 5 Epoch: 6 Loss 0.00306/0.00372 MAE 3.80572/3.98706
Fold 5 Epoch: 7 Loss 0.00300/0.00607 MAE 3.75447/5.70704
Fold 5 Epoch: 8 Loss 0.00292/0.00473 MAE 3.68646/4.21410
Fold 5 Epoch: 9 Loss 0.00291/0.00299 MAE 3.61322/3.81180
Fold 5 Epoch: 10 Loss 0.00281/0.00635 MAE 3.58025/5.52059
Fold 5 Epoch: 11 Loss 0.00317/0.00308 MAE 3.78247/3.53311
Fold 5 Epoch: 12 Loss 0.00280/0.00840 MAE 3.58892/4.92759
Fold 5 Epoch: 13 Loss 0.00343/0.00341 MAE 3.79564/3.84999
Fold 5 Epoch: 14 Loss 0.00303/0.00385 MAE 3.62843/3.90398
Fold 5 Epoch: 15 Loss 0.00297/0.00771 MAE 3.63470/5.68825
Fold 5 Epoch: 16 Loss 0.00326/0.00447 MAE 3.75003/4.07704
Fold 5 Epoch: 17 Loss 0.00298/0.00583 MAE 3.66024/4.84518
Fold 5 Epoch: 18 Loss 0.00341/0.01341 MAE 3.89222/6.48514
Fold 5 Epoch: 19 Loss 0.00414/0.00561 MAE 4.12554/4.13227


Fold 6 Epoch: 0 Loss 0.86576/0.00339 MAE 30.12522/3.75524
Fold 6 Epoch: 1 Loss 0.01023/0.01223 MAE 6.79863/7.76517
Fold 6 Epoch: 2 Loss 0.00999/0.01130 MAE 7.03156/8.91614
Fold 6 Epoch: 3 Loss 0.00465/0.00230 MAE 4.86728/3.18715
Fold 6 Epoch: 4 Loss 0.00305/0.00539 MAE 3.86714/5.73368
Fold 6 Epoch: 5 Loss 0.00382/0.00243 MAE 4.43198/3.19708
Fold 6 Epoch: 6 Loss 0.00313/0.00766 MAE 3.91390/6.50056
Fold 6 Epoch: 7 Loss 0.00475/0.01015 MAE 4.94451/7.25125
Fold 6 Epoch: 8 Loss 0.00412/0.00246 MAE 4.52522/3.28646
Fold 6 Epoch: 9 Loss 0.00320/0.00284 MAE 3.93136/3.65408
Fold 6 Epoch: 10 Loss 0.00341/0.00302 MAE 3.97897/3.36249
Fold 6 Epoch: 11 Loss 0.00318/0.00353 MAE 3.75167/4.01775
Fold 6 Epoch: 12 Loss 0.00298/0.00281 MAE 3.71133/3.68553
Fold 6 Epoch: 13 Loss 0.00345/0.00428 MAE 3.89888/4.79099
Fold 6 Epoch: 14 Loss 0.00347/0.00558 MAE 3.93133/5.30763
Fold 6 Epoch: 15 Loss 0.00317/0.00307 MAE 3.80316/3.69749
Fold 6 Epoch: 16 Loss 0.00333/0.00299 MAE 3.76026/3.53186
Fold 6 Epoch: 17 Loss 0.00305/0.00340 MAE 3.65101/4.14215
Fold 6 Epoch: 18 Loss 0.00285/0.00335 MAE 3.62495/3.77899
Fold 6 Epoch: 19 Loss 0.00361/0.00384 MAE 3.81460/4.27685
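Validation MAE fluctuates considerably from epoch to epoch in these logs, so it matters which epoch's weights are kept for each fold. The following is a minimal sketch of best-checkpoint tracking, assuming the goal is to keep the weights from the epoch with the lowest validation MAE. It replays the fold 1 validation MAE values from the log; the function name `track_best` is hypothetical and no model is actually saved.

```python
# Validation MAE per epoch for fold 1, copied from the log above.
fold1_val_mae = [
    4.72619, 3.74488, 4.33141, 4.13233, 4.12983,
    3.43066, 3.20836, 4.48110, 3.63162, 4.06164,
    3.56259, 3.61342, 4.32717, 5.60564, 4.86328,
    3.99001, 6.40011, 5.00629, 3.99643, 4.83937,
]


def track_best(val_maes):
    """Return the epochs at which a checkpoint would be saved, and the best MAE."""
    best_mae = float("inf")
    saved_epochs = []
    for epoch, mae in enumerate(val_maes):
        if mae < best_mae:
            best_mae = mae              # remember the new best score
            saved_epochs.append(epoch)  # this is where paddle.save(...) would run
    return saved_epochs, best_mae


saved_epochs, best_mae = track_best(fold1_val_mae)
print(saved_epochs, best_mae)  # [0, 1, 5, 6] 3.20836
```

For fold 1 the best epoch (3.20836 at epoch 6) is noticeably better than the last epoch (4.83937), which is the gap that best-checkpoint selection recovers.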

Step 6: Ensemble the fold predictions

test_pred = np.zeros((test_img.shape[0], 8))

# Load each fold's model and accumulate its predictions
for idx in range(6):
    model = make_model()
    layer_state_dict = paddle.load(f"model_{idx}.pdparams")
    model.set_state_dict(layer_state_dict)
    model.eval()
    test_pred += make_predict(model, test_loader) * 96

# Average over the 6 folds
test_pred /= 6
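Why averaging helps can be illustrated with synthetic data. In the sketch below each "model" is the ground truth plus independent Gaussian noise, which is an idealized assumption: real fold models share most of their training data, so their errors are correlated and the gain is smaller. All names and numbers here are hypothetical.

```python
import numpy as np


def ensemble_mean(fold_predictions):
    """Average per-fold predictions of shape (n_samples, 8) into one array."""
    stacked = np.stack(fold_predictions, axis=0)  # (n_folds, n_samples, 8)
    return stacked.mean(axis=0)


rng = np.random.default_rng(0)
truth = rng.uniform(20, 80, size=(100, 8))  # hypothetical ground-truth pixel coordinates

# Six hypothetical models: each is the truth plus independent noise.
fold_predictions = [truth + rng.normal(0, 3.0, size=truth.shape) for _ in range(6)]

single_mae = np.mean([np.abs(p - truth).mean() for p in fold_predictions])
ensemble_mae = np.abs(ensemble_mean(fold_predictions) - truth).mean()
print(f"single: {single_mae:.3f}  ensemble: {ensemble_mae:.3f}")

# The running-sum form (accumulate, then divide) gives the same result.
running = np.zeros_like(truth)
for p in fold_predictions:
    running += p
running /= len(fold_predictions)
```

Accumulating into a running sum, as the notebook does, avoids holding all six prediction arrays in memory at once.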


idx = 42
xy = test_pred[idx, :].reshape(-1, 2)
plt.scatter(xy[:, 0], xy[:, 1], c='r')
plt.imshow(test_img[idx, 0, :, :], cmap='gray')

<matplotlib.image.AxesImage at 0x7f7e98a3fe50>


<Figure size 432x288 with 1 Axes>